BBC Research & Development has been at the forefront of applications of augmented reality (AR) and virtual reality (VR) in broadcasting since the early days of virtual studios, bringing some of the magic that this technology enables to programme production. One example from this work is the sports graphics system that is used by the BBC and many other broadcasters around the world for post-match analysis.

We are now looking at capturing content that can be delivered directly to users for experiencing in 3D environments such as games, rather than just passively watching conventional video.

2D or not 2D? Exploring different approaches

One strand of this work involves investigating the use of volumetric capture technology. This is ideal for intimate performances of one or two artists under controlled lighting conditions and allows participants to view the performer from any location.

We are also researching approaches using broadcast cameras as part of our work on the MAX-R project. This approach may be better suited to a larger number of performers with complex stage and lighting conditions, at the expense of a less 3D feel and a more limited range of viewpoints.

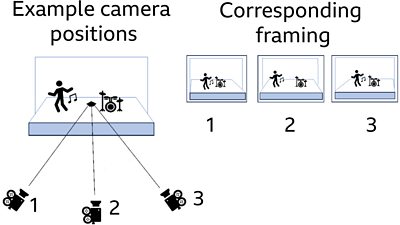

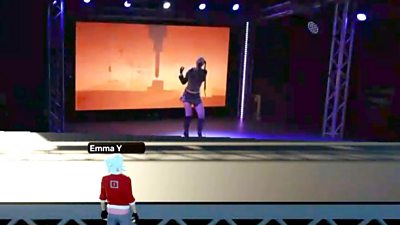

To give the audiences who are participating ('players') the illusion that the performer is in the space with them, our aim was to produce a realistic effect using video billboards (video projected onto 2D planes). The main disadvantage of video billboards is that they are flat, and the perspective doesn’t change as the player views them from different angles, breaking the illusion of 3D. To counteract this problem, we are filming the performer(s) simultaneously from multiple angles and swapping between the video feeds depending on the position of the player.
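The feed-swapping idea can be illustrated with a minimal sketch. It assumes three cameras at hypothetical bearings of -30, 0 and +30 degrees around the stage centre and simply picks the camera whose bearing is closest to the player's. The names `CAMERA_BEARINGS`, `player_bearing` and `select_feed` are invented for illustration and do not describe the actual implementation.

```python
import math

# Hypothetical camera bearings in degrees, measured at the stage centre
# from the direction the stage faces (0 = directly in front of the stage).
CAMERA_BEARINGS = {"left": -30.0, "centre": 0.0, "right": 30.0}


def player_bearing(player_x, player_y, stage_x=0.0, stage_y=0.0):
    """Bearing of the player as seen from the stage centre, in degrees.

    The stage is assumed to face along +y, so a player directly in front
    of the stage has bearing 0 and positive bearings are towards +x.
    """
    return math.degrees(math.atan2(player_x - stage_x, player_y - stage_y))


def select_feed(player_x, player_y, bearings=CAMERA_BEARINGS):
    """Return the name of the camera whose bearing is closest to the player's."""
    bearing = player_bearing(player_x, player_y)
    return min(bearings, key=lambda name: abs(bearings[name] - bearing))
```

A hard switch like this makes the billboard jump as the player crosses the midpoint between two cameras, which is why the transitions between views matter.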

We recently completed a live capture trial with four forward-thinking music artists who were willing to play live for us, be streamed into our 3D test worlds, and allow us to film the performances for subsequent development of the technology and for further trials as part of our MAX-R work.

Trial with live music performances

We collaborated with Production Park studios and the Academy of Live Technology to conduct our first technical trial and media shoot of music artists. They performed and were streamed live into a 3D space hosted by our project partner Improbable. The trial was directed and produced by BBC R&D’s Fiona Rivera with Spencer Marsden as technical producer. Three solo artists and one band were enlisted and performed with full stage lighting, dynamic graphics displayed on an LED wall backdrop, and occasional haze. Collaborating with Production Park gave us the opportunity to use real live event stage technology for the artists. This let us test our capture and streaming solution for potential integration with 3D environments under realistic yet controllable performance conditions, and film media for further enhancements to our approach.

We used three locked-off cameras (fixed position and settings) for our trial, pointing centrally and to either side of the stage. Although not essential, we also used a roaming camera to capture close-ups that could optionally be displayed on large virtual video screens placed to either side of the virtual stage, as would be the case at music festivals.

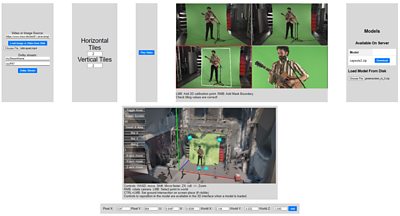

To accurately reflect the viewing angles within the 3D world, we need to know the physical location of the video cameras relative to the stage. To achieve this, we created some calibration software that matches points in the video images to known points in the 3D model of the performance space. When at least four points are set, the software can then work out the position and field of view of that video camera.
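The calibration software itself is not described in detail here, but the underlying idea can be sketched with a simple pinhole camera model. The example below is an assumption-laden illustration: it ignores pitch, roll and lens distortion, and the function names `project` and `reprojection_error` are hypothetical. A calibration routine would search for the camera position, orientation and field of view that minimise the reprojection error over the matched points. Each matched point contributes two equations (its horizontal and vertical pixel coordinates), so four points give eight equations for the seven unknowns of a full pose plus field of view, which is one plausible reason for the four-point minimum.

```python
import math


def project(point, cam_pos, yaw_deg, hfov_deg, width=1920, height=1080):
    """Project a 3D world point (x, y, z) to pixel coordinates (u, v).

    World axes: x right, y forward, z up. The camera sits at cam_pos and is
    rotated by yaw_deg about the vertical axis (0 = looking along +y,
    positive = turned towards +x). Pitch and roll are ignored in this sketch.
    """
    dx = point[0] - cam_pos[0]
    dy = point[1] - cam_pos[1]
    dz = point[2] - cam_pos[2]
    yaw = math.radians(yaw_deg)
    # Rotate the offset into the camera frame (right, forward, up).
    right = dx * math.cos(yaw) - dy * math.sin(yaw)
    forward = dx * math.sin(yaw) + dy * math.cos(yaw)
    if forward <= 0:
        raise ValueError("point is behind the camera")
    # Focal length in pixels follows from the horizontal field of view.
    focal = (width / 2) / math.tan(math.radians(hfov_deg) / 2)
    u = width / 2 + focal * right / forward
    v = height / 2 - focal * dz / forward
    return u, v


def reprojection_error(correspondences, cam_pos, yaw_deg, hfov_deg):
    """Mean pixel distance between observed and predicted image points.

    correspondences is a list of ((x, y, z), (u, v)) pairs linking known
    points in the 3D model to the pixels where they appear in the video.
    """
    total = 0.0
    for world_point, (u_obs, v_obs) in correspondences:
        u, v = project(world_point, cam_pos, yaw_deg, hfov_deg)
        total += math.hypot(u - u_obs, v - v_obs)
    return total / len(correspondences)
```

With the correct camera parameters the error is close to zero. A wrong field of view or position pushes the predicted pixels away from the observed ones, which gives an optimiser something to minimise.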

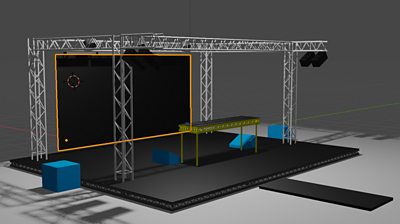

Our colleague Bruce Weir also developed a Blueprint Visual Scripting Class, customised for a test environment in Unreal Engine, that can read in the camera calibration information described above and accurately project video billboards into a 3D environment. We chose Unreal Engine because of its compatibility with Improbable’s metaverse platform. An adaptation of this Blueprint was also integrated into a testbed environment on their platform, using a scale 3D model replica of the stage to be used in our trial.

We used a 7m x 5.7m stage set, with lights and LED wall rigged from a truss construction. The LED wall was a set of 10 x 5 Roe Black Pearl panels, driven by a Brompton Tessera S4 running at low latency, with Resolume software controlling video playback. We also tested using Unreal Engine as a triggered content generator with one of Production Park's projects. Additional LED wall graphics were provided by our graphics team, and in some cases, the artists’ teams.

Lights were programmed and controlled to be bold, and synchronised with the musical content, not only highlighting the artist but also helping define the stage dimensions. As haze is used extensively in live events, it was also an ideal opportunity to see how it affected the capture pipeline.

The sound was prioritised for the artists' on-stage monitoring, or in-ear monitoring where preferred, with some sub-bass units to keep it vibey and familiar onstage. As there was no in-person audience in the studio, we did not rig a public address (PA) system. This also helped keep operational audio levels manageable for the crew.

The technical trial

We aimed to use our multi-camera setup to stream live performances into two stripped-back 3D environments. We wanted to check the practicalities of our capture setup, along with what improvements we might need to make to the capture and streaming process before launching user trials with purpose-built 3D environments reflecting a live music event.

While filming live, we merged four video feeds into a single quad image for streaming to our Unreal application and simultaneously to our partner Improbable’s 3D testbed. We did this mainly to keep streaming bandwidth to a minimum, so as not to cause undue latency in the 3D testbeds, and to ensure that all video feeds remained synchronised with the audio. The quad included the video feeds from the three locked-off cameras (top left, top right, and lower left), which supply the virtual video billboards of the performers and are switched based on the position of the users / players. The lower right of the quad carried the close-ups from the roaming camera, offering optional media for supplementary side screens.
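On the receiving side, each feed has to be cropped back out of the quad frame. The sketch below shows one way to compute the crop rectangles. The feed names and which locked-off camera sits in which quadrant are assumptions made for illustration, as is the frame size used in the test values; only the roaming camera's lower-right position and the three quadrants used by the locked-off cameras come from the description above.

```python
# Assumed assignment of feeds to (column, row) quadrants. Only the set of
# quadrants used by the locked-off cameras and the roaming camera's
# lower-right slot are taken from the article; the names are hypothetical.
QUAD_LAYOUT = {
    "camera_a": (0, 0),  # top left
    "camera_b": (1, 0),  # top right
    "camera_c": (0, 1),  # lower left
    "roaming": (1, 1),   # lower right
}


def quad_crop(feed, frame_width, frame_height, layout=QUAD_LAYOUT):
    """Return (left, top, width, height) in pixels for one feed in the quad."""
    col, row = layout[feed]
    w, h = frame_width // 2, frame_height // 2
    return col * w, row * h, w, h
```

Packing four feeds into one frame means each feed gets a quarter of the transmitted pixels, but all four share the same timestamps, so they cannot drift apart from each other in transit.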

Conclusion

We successfully streamed into our basic test environments with the video quad sections from three locked-off cameras showing the performers on the virtual stage. The video feed from the lower right of the quad supplied the roaming camera shots for the virtual side screens. Our test users (who joined the virtual event online through Improbable’s platform) were able to move around the environment as avatars while watching and listening to the performers. Although not included as a focus of the trial, the users could also interact with each other through audio and text and express themselves through some dance and clapping movements.

We plan to explore the technical side of capturing and streaming further, and to improve the transitions between camera views within the 3D spaces. We also plan to develop a 3D environment complete with interactive functionalities for user trials of live immersive music events.

Acknowledgements

We would like to thank the music artists - Badliana, KYDN, New Wounds, and TWST - for their willingness to perform live for our trial, and their teams who supported them.

We also would like to thank our hosts Production Park and crew provided by Academy of Live Technology, including Production Park leads Ian Caballero, Connor Stead, and Aby Cohen MA tutor, Deryck Jones (LED wall tech), Joshua Warnes (LED wall tech), Euan Macphail (sound), Reece Burdon (kit), Tom Stokes (behind the scenes photography), James Ward (KYDN graphics), Alexander Chun (lighting tech).

Our thanks also go to Alex Landen and the team at Improbable for supporting and hosting the technical trial. Images of the live studio that are used throughout this article are courtesy of Tom Stokes.

The BBC MAX-R on-set crew included Fiona Rivera (trial director and lead producer), Sonal Tandon (DoP, TWST segment director), Thom Hetherington (production management, camera op), Spenser Marsden (technical producer), Jack Reynolds (streaming and sound), Maxine Glancy (streaming & artist liaison), and Alia Sheikh (lighting and graphics). Our technical team included Bruce Weir who developed the capture and calibration approaches, with Paul Golds supplying expertise in Unreal Engine Blueprints and environments, and Peter Mills in streaming setups. Thanks also go to our BBC colleague Thadeous Matthews for Badliana graphics.

MAX-R is a 30-month international collaboration co-funded by Horizon Europe and Innovate UK, Grant agreement ID: 101070072.